Earlier this week I installed iOS 13.4, a fairly significant update to the iPhone’s operating system. Whilst it included new features such as iCloud Drive folder sharing and improvements to the Mail application, amongst others, it’s a pity Apple so infrequently improves accessibility features between annual operating system updates.

I have been waiting six months for the tech giant to improve access to my iPhone, including support for UK English in Voice Control dictation; the ability to toggle the Auto-Answer feature on and off with Siri; and the ability to hang up phone calls by a voice command, as I can with my smart speakers at home (including the HomePod).

For macOS users Apple also released a new update this week, and it was encouraging to see it included new head pointer support. You can set it up to move the pointer with head movement and left/right click and drag with eyebrow, smile and tongue gestures (all configurable).

I am quadriplegic, as a result of muscular dystrophy, which means I have difficulty using an iPhone screen for writing an email, sending a message, posting to Facebook and Twitter, or controlling my smart home. Rather than typing on to a screen I rely on voice recognition technology to get things done.

Last year Apple introduced Voice Control, which enables greater control of the iPhone with voice commands. But as I have discovered over the last six months, it is still a work in progress, and many features and significant changes are still needed to make the iPhone more accessible and useful.

There are loads of general things I would like to see in iOS 14 when it is released later this year (multiple timers would be good, for example), but many are small tweaks not worth making a fuss about. Here’s a list of the five biggest and most far-reaching accessibility features I hope to see in iOS 14.

Note: I have a severe physical disability with normal speech. Therefore, these are the accessibility features I’d like to see for people who want to control more of their iPhone with their voice.

1) Better Voice Control and dictation

Voice Control is Apple’s biggest accessibility initiative ever. It is designed for people who can’t use normal methods of text input on an iPhone, and it has two main functions: it allows people to dictate emails or messages with their voice, and to navigate their screen with commands such as “open Safari” and “quit Mail”.

Voice Control is good for navigation by voice commands but not very good at accurate dictation, which makes it frustrating to use. It does not allow you to “dictate seamlessly into any text box”, as Apple claims. It’s a sad indictment that, as a quadriplegic, I do not use it at all, because the application constantly fails to convert my spoken words into text correctly.

Some of Voice Control dictation’s poor performance may be due to the fact that only US English is powered by the Siri speech engine for more advanced speech recognition. Apple hasn’t said when UK English will be added but I am hoping it will be available with the release of iOS 14 later this year.

Better and more accurate dictation will require new hardware, such as more advanced far field microphone technology, but it will also require smarter software such as that demonstrated by Google and its next-generation assistant on the Pixel 4.

In comparison to what Google offers, Apple dictation is so slow and inaccurate that most people don’t bother using it. It can’t keep up with a normal talking pace, and it doesn’t do a very good job of making a sentence out of a string of dictated words.

Apple really needs to up its game in iOS 14 with a beefed-up speech-to-text engine, which makes dictation significantly faster and more accurate across a range of accents.

2) Smarter Siri

Access to intelligent assistants like Siri is key to mainstream consumer appeal, and for physically disabled users who have difficulty handling an iPhone, the hands-free voice capabilities that Siri now offers are so liberating.

Wearable on-the-go products like AirPods connected to my iPhone let me take Siri with me all day. I can go out alone in my wheelchair and feel secure by being able to make phone calls from my iPhone with a Siri voice command. I can send friends and family a text message. In these small but significant ways Siri has allowed me to interact with my iPhone almost like anyone else. The benefits for me are both in terms of personal safety and social interaction.

However, Siri still needs a lot of work. It still lags way behind Google Assistant and Alexa in its ability to answer general questions and elegantly perform actions with third-party hardware and services.

The iPhone is, well, a phone, and for many people that remains its core function. Yet you still can’t use a Siri voice command to end or hang up a phone call. You can place a call hands-free with a Siri voice command, but you can’t end it. This causes me problems every day: when people don’t pick up my calls I get stuck in their voicemail boxes, because I am unable to touch the iPhone screen to press the red button to end the call.

Voice Control is a joint effort between Apple’s Accessibility Engineering and Siri groups. Its aim is to transform the way physically disabled users access their iPhones. You talk, it gets things done. Yet Apple chose to bring in Voice Control as a dedicated accessibility feature, when it could have done very similar things by expanding the capabilities of Siri, as outlined above, so that better, more effective voice control is available to everyone. That would be a more inclusive approach, and it is what I would like to see from the company this year.

With iOS 14 I want Apple to really enhance Siri and recognise its huge potential for accessibility purposes. I want an all-new Siri that offers a whole new digital assistant experience: one that is faster, smarter, better understands both your words and your intent, and is more proactive about doing things on your behalf. I want Siri to be pervasive across Apple devices: the iPhone, the Watch, and Mac computers.

3) Auto-Answer

Auto-Answer is a little-known feature buried deep in the accessibility settings on iPhones that enables phone calls to be answered automatically without the need to touch the iPhone screen. It is really useful for anyone who can’t easily reach for their iPhone and touch the green answer icon on the screen when a phone call comes in.

However, for the people who rely on it as their only option for handling phone calls, the problem is that you still can’t toggle Auto-Answer on and off with a Siri voice command, or create a Siri Shortcut so that, for example, phone calls are answered automatically every time you put your AirPods on, and are not every time you take them off. This would be very useful for users in my situation. Most other accessibility features can be activated by a Siri command. Hopefully, this no-brainer will be addressed by Apple in iOS 14.

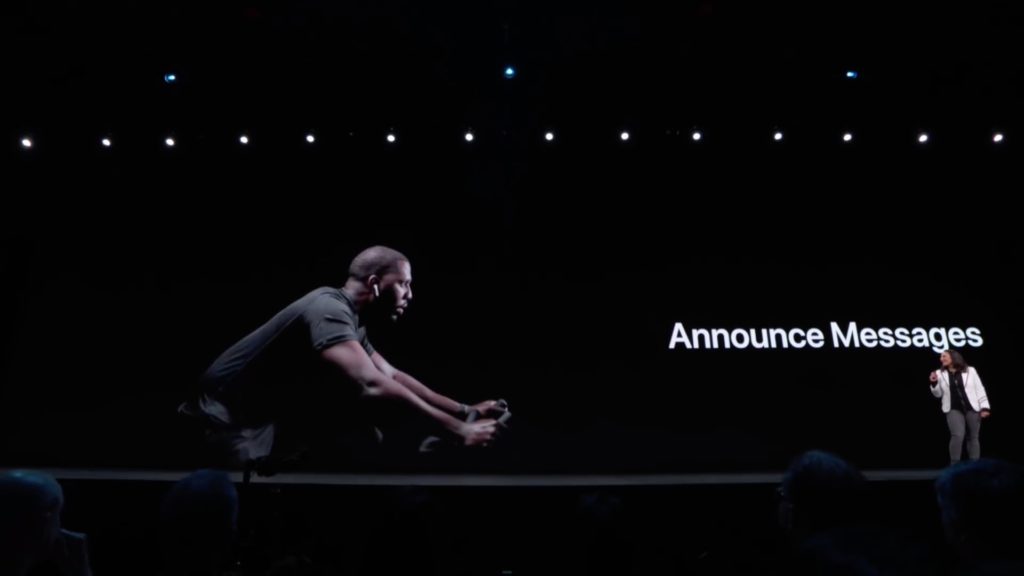

4) Announce Messages with Siri

Announce Messages with Siri is a great feature that came to iOS 13 last year. It enables anyone with AirPods to have their messages read out to them by Siri automatically, and to reply by voice, with Siri transcribing the response. It has a lot of benefits for people who have difficulty interacting with their iPhone screen. I find the feature very useful, and it will become even more useful when other messaging services, such as WhatsApp and Facebook Messenger, become integrated. Apple really needs to release an API in iOS 14 so other developers of messaging services can make use of it. This will really widen access on the iPhone.

5) Accessibility menu

The accessibility menu got a big update last year in iOS 13, moving up to a more prominent spot on the main Settings page. In doing so, accessibility was also cleaned up and organised, so there are more sub-menus.

Perhaps understandably, Apple puts all that it offers people with disabilities under one menu. However, not all disabilities are the same, and if you have a physical and motor disability it can be frustrating to have to scroll down past settings for people with visual impairments to get to the settings you need. If you use the Auto-Answer feature or AssistiveTouch, it takes quite a lot of scrolling and a few clicks to get to where you need to be. I’m not sure what the rationale is for the current hierarchy, where visual comes first and then physical, but in iOS 14 Apple should make the hierarchy of the accessibility menu completely customisable to the user’s own disability.

Your iOS 14 wishlist?

Last year Apple introduced a tonne of new features and functionality across the iPhone. But it came at the price of stability, with bugs still being ironed out six months on. This year, when it comes to accessibility, there are not expected to be many significant new features as the company doubles down on performance, but what it can do is improve and refine existing features and functions in ways that will make a real difference to users.

I’ll have some more suggestions in the coming months but, for now, I want to hear your suggestions. When it comes to accessibility what do you want to see in iOS 14 in 2020?