Apple’s operating systems and apps face various performance and reliability issues: AirPlay problems when connecting multiple HomePods, a HomeKit bug that can render iOS devices unusable, and more…

However, what no one (apart from disabled users) ever talks about is how buggy, unreliable and inaccurate dictation is in Voice Control, the company’s flagship speech recognition application for disabled people with upper limb mobility impairments.

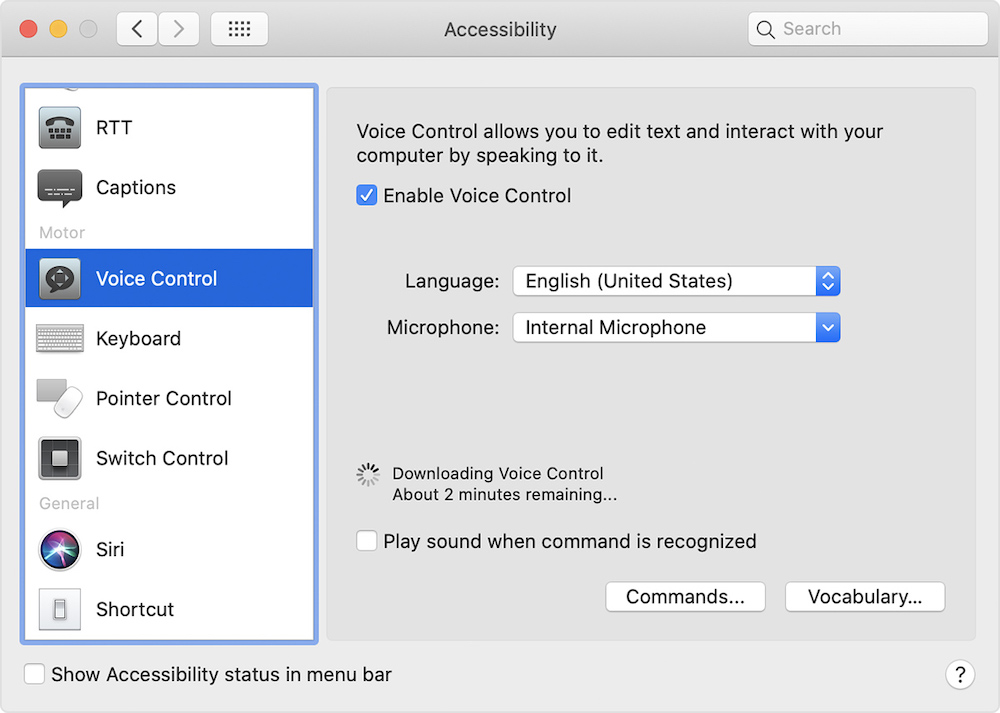

With Voice Control, Apple says you can navigate and interact with your iPhone, iPad or Mac computer using your voice to tap, swipe, dictate and more.

The application was unveiled to much fanfare on stage at Apple’s WWDC 2019 developer conference, just a few months after Nuance ended support for its then-leading Dragon speech recognition application for the Mac.

The tech press reported positively on Voice Control, and disabled people had good reason to hope that the accessibility app, built by Apple and baked into its operating systems on the Mac, iPhone and iPad, would really and truly transform the lives of anyone who needs to control their gadgets with only their voice.

However, more than two years on from launch, dictation with Voice Control remains maddeningly poor in macOS Monterey (and in iOS 15 and iPadOS 15), and Apple has done nothing to improve it. As a tool to help disabled people post to social media, study and hold down jobs, it’s next to useless.

Can a $3 trillion company do better? Damn right it can. Here are some of the major problems users face…

Garbled grammar

Voice Control doesn’t always take sentence grammar into account. For example, dictating the word “will” often leads, incorrectly, to it being capitalised as “Will”, as in the man’s name. This is annoying and unproductive. It’s intermittent but happens especially if you pause dictation. It happens with other words too, which slows you down as you constantly have to clean up dictation errors.

Misquoting

When you dictate the command “open quotes”, a space isn’t automatically inserted after the last word dictated, so the text appears like this: the quick brown fox” jumped over the fence”. The formatting is wrong, with the quotation mark butted hard against the last word dictated, and you have to go back and edit the text so that there is a space after the last word dictated (fox) and the open quote mark sits immediately before jumped. This should happen automatically when dictating. It does in other speech recognition apps.

Misfiring emoji

It’s a similar situation with dictating emoji. No space is inserted after the last dictated word, so the text appears as: “the quick brown fox jumped over the fence👍”

When dictating in the macOS Mail app, no space is inserted between the last word dictated (fence) and the emoji. It looks very unnatural, and you have to go back and enter the space manually. It should happen automatically. Very unproductive. Apple made a virtue of dictating an emoji in a film shown on stage at WWDC 2019, to warm applause, but the feature hasn’t been implemented properly.

Doesn’t work with WhatsApp

Voice Control dictation doesn’t work in the text box of the WhatsApp app downloaded from Apple’s Mac App Store. Why does Apple allow apps in the App Store that don’t meet basic accessibility requirements, such as a popular messaging app, used by billions of people every day, that doesn’t support Voice Control dictation?

This sort of thing should be a minimum requirement for the App Store. Is accessibility part of Apple’s now infamous App Store policies? If not, it’s time it was, as disabled people’s access and life chances, socially and in education and work, are too important to ignore.

Dictating text into the WhatsApp Web text box in Safari on a Mac sometimes freezes the web page, and only quitting and relaunching Safari will fix the issue. You lose all the text you were dictating, which is very inconvenient. It often happens if you pause dictation.

Works in some browsers but not others

Voice Control dictation doesn’t work with third-party browsers like Firefox for Mac. Why? Voice Control users can’t dictate into any text boxes in Firefox for Mac, including WhatsApp Web. This may well be a Firefox issue, but surely Apple can bring its considerable influence to bear on this unfortunate situation. Things aren’t much better in Google Chrome on the Mac either. Disabled users are being failed by this lack of access.

Works in some text boxes but not others

Frequent dictation errors aside, whilst Voice Control works well in Apple’s own applications such as Messages, Mail and Pages, it’s a lottery which third-party text boxes will work with speech recognition apps more generally. Some of the biggest platforms in the world, Facebook, Twitter and WhatsApp included, talk big about their accessibility credentials yet have text boxes that aren’t accessible to speech recognition apps.

Not being able to dictate into text boxes on a number of very high-profile platforms is the digital equivalent of a restaurant, shop or public building refusing to provide a ramp for a wheelchair user. It’s unacceptable, and these days it’s illegal in a number of countries.

Speech recognition apps blame the platforms and the platforms blame the speech recognition apps, but it is the disabled user who is denied access and therefore silenced.

Takes over

The “delete that” command redundantly takes over the function of the “delete character” command. You can only delete a whole word once with “delete that”. If you say “delete that” a second time, Voice Control deletes only the last letter of the last word dictated.

This isn’t helpful, because a recognition error often spans two or three words, so why can’t you say “delete that” two or three times and have a whole word deleted each time? Deleting only the last letter of a word serves no purpose for the user. There is the option to delete whole phrases, but sometimes “delete that” is easier still. Users just need to be able to use “delete that” more than once.

Doesn’t recognise foreign names

Voice Control often doesn’t recognise foreign names. It would be great if the app offered a custom pronunciation feature for contacts to get round this problem. Google offers this on Android phones, and I’m sure something similar could be applied to Voice Control on both the Mac and iPhone.

The frustrating thing is that the names are already stored in Contacts on the device, yet dictation doesn’t recognise them. A custom pronunciation feature would solve that.

Voice Control isn’t smart

More broadly, the Dragon speech recognition app for Windows computers lets you train words so that the same dictation errors don’t occur repeatedly. This is a feature that would make Voice Control dictation more productive and less frustrating to use. Voice Control is an application that should improve with use and learn from its mistakes. Repeating the same dictation errors over and over is extremely tedious. Very crude, and un-Apple-like in my opinion.

A microphone for dictation

Apple could improve the dictation experience with Voice Control by releasing a microphone designed especially for speech recognition. A small USB desktop mic would be perfect for the job. Quality speech microphones play a crucial role in accurate voice dictation, especially in moderately noisy environments. Apple says little about microphones in its support documents for Voice Control, suggesting that all the magic is in the app and that all you need to excel at accurate voice dictation is a Mac’s internal microphone or the company’s AirPods. Nothing could be further from the truth, as anyone who has tried dictating this way will testify.

Disabled people need and want more

Despite this takedown, Voice Control is overall a great app, and it’s to the company’s immense credit that the technology is bundled free with every Mac, iPad and iPhone. Navigation help and custom commands are powerful features, but the user experience is severely compromised by the limitations and poor accuracy of long-form dictation on the Mac, in emails and documents.

Disabled people need and want more than the ability to dictate a short “Happy birthday ❤️” iMessage with a cute emoji.

The numerous bugs and shortcomings may sound niche, affecting a small number of users, but disabled users deserve the right to communicate easily and effectively with friends and family on social media, and to excel in education and employment with the help of good quality speech recognition.

It could also be argued there’s a powerful mainstream appetite for improved and more accurate speech recognition on the Mac from people with dyslexia, RSI, and professionals like doctors, lawyers, and surveyors. Make dictation great for disabled users and you make it great for everyone. That’s a powerful selling point.

Speech recognition competition

It’s an interesting time for speech recognition more widely. Microsoft has bought Nuance, the leading speech recognition company, along with its Dragon product for Windows computers, and is already putting its new acquisition to work with Voice Access, a new app currently being tested in the Windows Insider programme.

Google has also significantly improved speech recognition on its new Pixel 6 phones with the Tensor chip, which uses machine learning to improve natural language processing for speech-to-text. Hopefully, the company will bring the same tech to its Pixelbooks.

Faced with such competition, Apple really needs to up its game when it comes to speech recognition.

Hello, Apple, is anyone out there?

The bugs, shortcomings and feature improvement suggestions mentioned in this post have been reported to Apple many times over the last couple of years and nothing has been done.

Let’s hope 2022 will be the year the company listens and finally shows Voice Control dictation on the Mac some ❤️. It’s long overdue.

Are you satisfied with the accuracy of dictation with Voice Control? Let me know in the comments section below.

iangilman

Colin, thank you for documenting these issues, and more broadly for speaking about Apple’s Voice Control! I’ve just switched to Voice Control from Dragon, and I’m interested in connecting with a community of people using it. Are you connected with or aware of such a community? If not, would you be interested in helping to start one?

At any rate, thank you for all of the information!

Colin Hughes

Hi Ian, thanks for your reply and many apologies for the delay in getting back to you. I’ve only just picked up on your comment. I’m sorry, I’m not aware of a community of Apple Voice Control users, but I think a community is a good idea and may help shape and influence Apple’s improvement of the application, particularly when it comes to long-form dictation. Dictation with Voice Control could and should be better than it is.

iangilman

Awesome! Thank you for getting back to me 🙂

I’m happy to start something… what would you think about Discord as a place to begin?