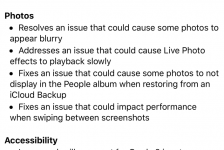

After three months of beta testing over the summer, Apple has released iOS 14 to the public. It brings a range of new features, including widgets on the Home screen, an all-new App Library, and much more.

I have been using the software for the past couple of weeks, and this is my take on the good, the bad and the work still to do to make iOS 14 and the iPhone accessible for people who find it difficult or impossible to use their iPhone with their hands.

I have a severe physical disability, muscular dystrophy – a muscle-wasting disease which leaves me effectively quadriplegic, unable to do much with either arms or legs. I rely on my voice to control and interact with my Apple iOS gear.

Phone calls

iOS 14 introduces a minimised phone call pop-up that doesn’t take over the entire screen, something iPhone users have been requesting for years. Incoming phone calls are now shown in a notification banner rather than the full-screen incoming call display used in iOS 13.

The feature also works with FaceTime calls, and Apple has created a new API for the compact call interface that will let developers incorporate the feature into third-party apps like Facebook Messenger, WhatsApp, Skype, and more.

However, for people who can’t touch the iPhone screen to press the green and red call buttons, minimised or not, iOS 14 brings no improvements to handling phone calls.

Accessibility settings on iPhones have offered an auto-answer capability for incoming phone calls since iOS 11. It’s perfect for people who can’t touch the iPhone screen to answer a call. But you can’t ask Siri to switch it on, so a feature most useful for people who can’t touch a screen requires you to … touch a screen to enable it.

Answering phone calls is not the only problem; ending them is too. Using Siri on iOS devices, users can place a call by saying “Hey Siri, call…”, but there’s no “Hey Siri, end call” command. This means that if you call a number and it goes to voicemail, you have to wait until the mailbox times out before the call ends, which is irritating for both parties. I have lost count of the times I have called someone and ended up in their voicemail, with nothing I can do to end the call because I can’t press the red button on the iPhone screen. The sense of powerlessness is deeply frustrating.

Apple is always conscious of privacy, and perhaps it is reluctant to allow Siri to handle phone calls. But I think that, for accessibility, there should be an option to activate such a feature, with appropriate warnings that it may compromise your privacy. At least then users can make a balanced and informed choice between independence and privacy.

Or, better still, give Siri capabilities similar to Google Assistant’s Voice Match feature, which can recognise the voices of up to six different people. Voice-based security of this kind works without sacrificing convenience or accessibility. It would go a long way towards preventing the dreaded message Siri gives when you ask it to do almost anything while your iPhone is locked: “You’ll need to unlock your iPhone first”. That message is a huge barrier to accessibility and independence.

Messages

iOS 14 brings a number of improvements to Messages including pinned conversations, group photos, mentions, and in-line replies.

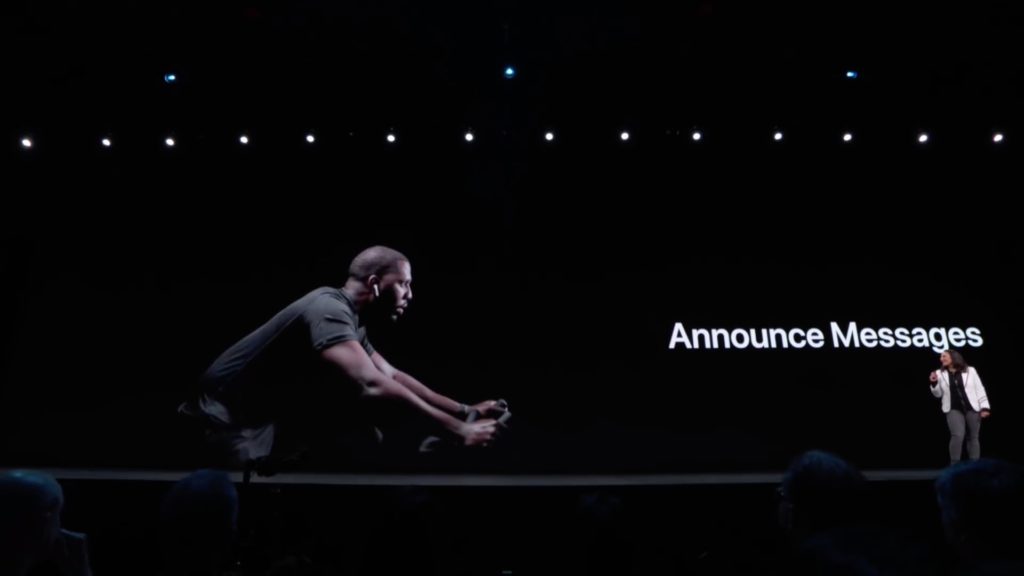

When it comes to sending and receiving messages on my iPhone, I have found Announce Messages with Siri, introduced in iOS 13, transformational.

Apple doesn’t class Announce Messages as an accessibility feature, but it has had a huge impact on my life. I can go out for a walk on my own in my wheelchair with AirPods in my ears, think of a friend, spontaneously text them, and hear their reply. I have never been able to do this before, and you can’t put a price on that level of independence.

However, in iOS 14 Apple hasn’t expanded the feature to include other messaging services such as WhatsApp and Facebook Messenger. Announce Messages with Siri only works with iMessage at the moment. This means that if I receive a WhatsApp or Facebook message, I don’t have the same ways of interacting with it, and this lessens my independence.

I would like to see Apple open Announce Messages with Siri to other messaging services. For accessibility, it would be great to have every message that arrives on your iPhone read out to you through your AirPods, with the ability to dictate a reply on the hoof.

For some this will be convenient, but for others essential. When Apple first launched Announce Messages, it looked as though it was going to release an API for other messaging services, but sadly this has not yet come to pass.

Siri

Siri got a boost in iOS 14 with a compact new design that does not take up the whole screen when you summon the voice assistant. Apple says Siri now knows 20 times more facts than it did just three years ago, and you can now send audio messages with Siri, which is great and makes messaging more personal. Third-party messaging apps are supported through SiriKit, though I have yet to see other messaging apps integrate more tightly with Siri.


For people with physical disabilities, Siri is an important way to get information and get things done, so the more useful Siri becomes the better. As mentioned above, it is a pity that there are still many fundamental things Siri can’t help with, such as turning the auto-answer feature on and off, and ending phone calls. You wouldn’t hire a secretary who couldn’t handle your phone calls, so Siri has a way to go before she can become my intelligent personal assistant.

AirPods

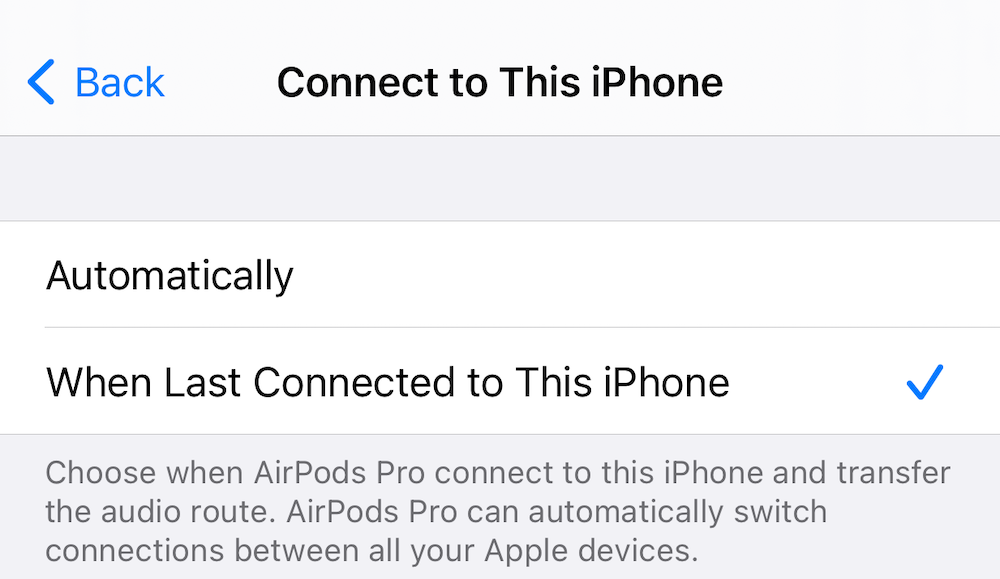

iOS 14 comes with several new features that improve how the AirPods and the AirPods Pro work with iPhones and other Apple devices, including spatial audio, automatic device switching, battery notifications, and Headphone Accommodations for those who need help with sounds and frequencies.

Following an AirPods firmware update timed to coincide with the release of iOS 14, AirPods will now automatically switch between your iPhone, Mac, iPad and Apple Watch when they are paired to the same iCloud account. In theory, this makes it even easier to use your AirPods with your Apple devices day to day. You can be listening to music on your iPhone, move to watch a video on your Mac, and the AirPods will be ready with the audio.

I say in theory because I have experienced issues with automatic switching. I have several Apple devices in my home, all on the same iCloud account: two iPhones, an Apple Watch and a MacBook. I can’t regularly pick up and touch my devices to trigger automatic switching, so when my care worker puts my AirPods in my ears they don’t necessarily connect to the device I want. And if I leave home, they won’t automatically connect to the Apple device I have with me. I have found myself cut off from my AirPods because they haven’t switched to the device I want, which is a showstopper for me. Fortunately, you can disable automatic switching by choosing the “When Last Connected to This iPhone” option in the AirPods settings on the iPhone.

As a result of the AirPods firmware update that accompanied iOS 14, Always On Siri will now work with AirPods Pro when you are lying down in bed. In June, I contacted Apple to let them know that Always On Siri didn’t work with AirPods while you were lying down, a real problem if illness or disability means you spend a lot of time in bed. Following the release of iOS 14 and the new firmware, it now does. Unfortunately, the functionality doesn’t work on the second-generation AirPods. This new AirPods Pro functionality is probably a byproduct of the spatial audio feature, which tracks your head movement using accelerometers and gyroscopes in the AirPods Pro.

Voice Control

Voice Control, Apple’s speech recognition feature for people with physical and motor disabilities, got an update in iOS 14 and now supports UK English; as a Brit, I have found this improves the accuracy of my dictation. The company has also cleared up a serious bug that prevented users from dictating accurately into the Google search box in Safari, and into many other text boxes on prominent websites around the world. These two developments improve Voice Control, but dictation accuracy remains a problem and using it is still a frustrating experience overall.

Voice Control on the iPhone has a long way to go before it can match the incredible new capabilities of Voice Access in Android 11. Voice Access can now understand screen context and content, so you don’t have to use a grid or button numbers; you can just say what is on screen to get things done. For people who can only interact with their phone via their voice, it’s a game-changer on phones like the new Google Pixel 5.

iOS 14 Verdict: Room for improvement

Whilst many of the mainstream improvements in iOS 14, such as Home screen widgets, the App Library and compact phone calls, are proving extremely popular with iPhone users, Apple still has some way to go to make iOS 14 and the iPhone fully accessible to people who can’t interact with the iPhone screen with their hands.

I am loving many of the new features, but they are fairly meaningless if the accessibility isn’t there to do the basics like calling and messaging. The struggle I have to access my iOS gear every day has not fundamentally changed with the release of iOS 14, and the problems add up to a highly frustrating day-to-day experience. These shortcomings aren’t just down to bugs or poorly implemented features; I feel they are part of a larger problem. It appears to me that Apple doesn’t fully understand how people with limited mobility want to use their iOS devices.

The failings I am highlighting won’t bother many people. Most are no doubt enthralled with the Home screen widgets and the App Library, but these issues are incredibly important for physically disabled people, and I have been campaigning on them for the past couple of years. Getting them fixed can literally make or break my day, my life even.

Apple should consider why it needs both Siri and Voice Control. The company should make Siri more powerful by including all the Voice Control features. That way, there would be only one voice technology to manage, and because Siri is a mainstream technology, it may get a higher priority than a feature used by a small minority of Apple customers. This is the sort of inclusive approach I would like to see the company take going forward.

To be fair to Apple, it is worth acknowledging that the company did introduce several accessibility improvements for a range of disabilities in iOS 14, including improvements to the VoiceOver screen reader, a new Back Tap feature, sign language detection in FaceTime group calls, and Headphone Accommodations to help you hear better.

However, I’m left with the impression that the company felt that, after introducing the somewhat limited Voice Control last year, it had done enough for people with physical and motor disabilities for the time being, and that iOS 14 was not the year to bring in any more improvements in this area. If that’s the case, it is disappointing, because there is still so much work to do.

Following interviews I gave to 9to5Mac and The Register over the summer, I had a brief discussion with an insider at Apple and was told that all the points I’ve raised are things people at the tech giant are looking into and/or working through.

With this in mind, I think Apple would be wise to listen, because even if you can use your iPhone with your hands, improved voice access would be popular with many people for those times when you’re busy doing something else and need to do something on your iPhone.

Here’s hoping that over the next 12 months Apple will act on the feedback I and others have given them.